fusilli

Don’t be silly, use fusilli! 🍝

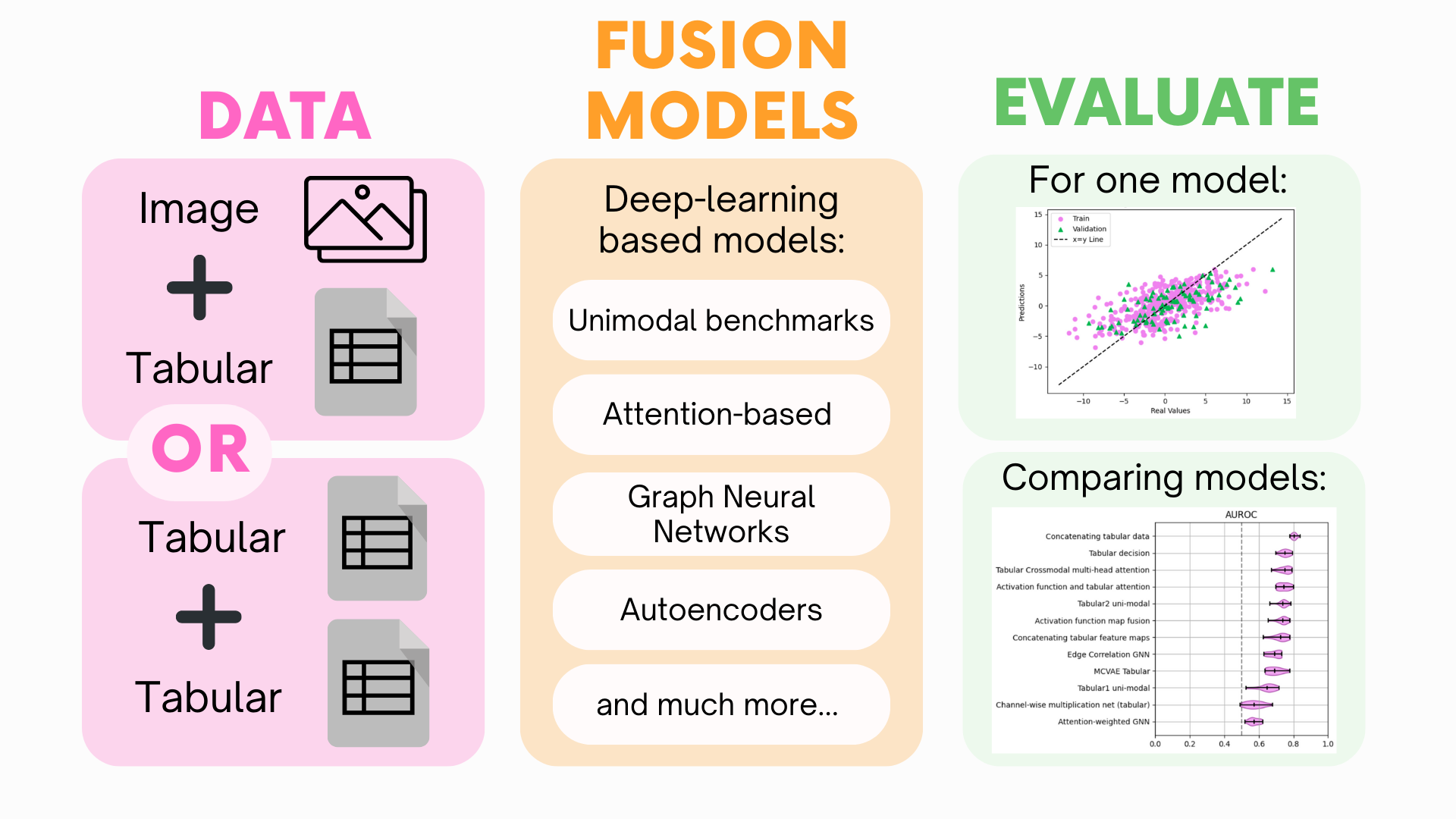

Welcome to fusilli! Have you got multimodal data but aren't sure how to combine it for your machine learning task? Look no further! This library provides a way to compare different fusion methods for your multimodal data.
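As a rough illustration of what "combining" modalities can mean, the simplest approach (often called early fusion) concatenates each subject's features from both modalities into one vector before training a single model. The sketch below is plain Python and is not fusilli's API. The function name `early_fusion` and the example data are hypothetical, and it assumes each modality is a mapping from subject ID to a list of numeric features.

```python
from typing import Dict, List

# Hypothetical representation: subject ID -> feature vector for one modality.
Features = Dict[str, List[float]]


def early_fusion(modality_a: Features, modality_b: Features) -> Features:
    """Concatenate both modalities' features for every subject present in both.

    Subjects missing from either modality are dropped, since a fused
    vector cannot be built for them.
    """
    shared_ids = sorted(modality_a.keys() & modality_b.keys())
    return {sid: modality_a[sid] + modality_b[sid] for sid in shared_ids}


if __name__ == "__main__":
    blood_tests = {"p1": [5.1, 0.3], "p2": [4.8, 0.7], "p3": [6.0, 0.2]}
    questionnaire = {"p1": [2.0], "p2": [3.0]}  # p3 has no questionnaire data
    fused = early_fusion(blood_tests, questionnaire)
    print(fused)  # {'p1': [5.1, 0.3, 2.0], 'p2': [4.8, 0.7, 3.0]}
```

Early fusion is only one option; other fusion methods combine the modalities later, for example after each has passed through its own sub-network, and comparing such alternatives is what fusilli is for.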

Why would you want to use fusilli?

| Problem | Solution |
|---|---|
| You have a dataset that contains multiple modalities. 🩻 📈 | Either two types of tabular data or one type of tabular data and one type of image data. Ever thought that maybe they'd be more powerful together? Fusilli can help you find out if multimodal fusion is right for you! ✨ |
| You've looked at methods for multimodal fusion and thought "wow, that's a lot of code" and "wow, there are so many names for the same concept". 🤔 🆘 | So relatable. Fusilli provides a simple way to compare multimodal fusion models without having to trawl through Google Scholar! ✨ |
| You've found a multimodal fusion method that you want to try out, but you're not sure how to implement it, or it's not quite right for your data. 😵‍💫 🙌 | Fusilli lets users modify existing methods, for example by changing the model's architecture, and provides templates for implementing new methods! ✨ |

🌸 Getting Started 🌸

🌸 Further Guidance 🌸

🌸 Tutorials 🌸

🌸 API Reference 🌸

Fusion models for tabular-tabular, tabular-image, and unimodal fusion.

Data loading classes for multimodal and unimodal data.

Contains the train_and_test function, which trains and tests a model and, if k-fold training is used, a fold.

This module contains classes and functions for evaluating trained models (e.g. plotting results from training).

fusilli.utils includes utility functions and modules, such as methods for modifying the fusion models' architectures, choosing which fusion models to run based on their characteristics, initialising and altering training/logging parameters, and saving the loss curves.